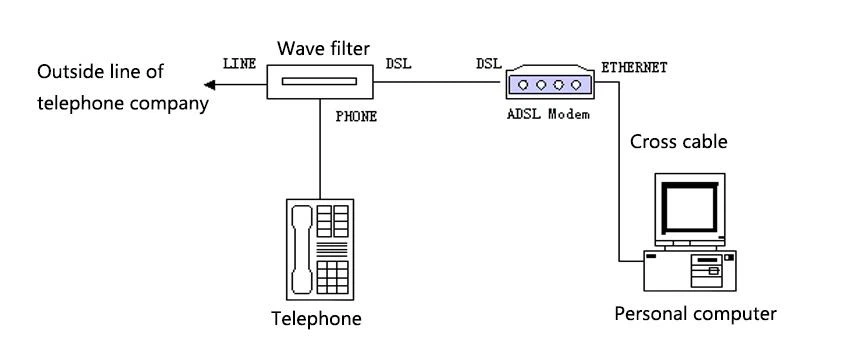

The terms latency, throughput, and bandwidth often cause confusion among individuals and businesses because they share some key similarities. They are also sometimes mistakenly used interchangeably. Despite this, there are distinct differences between the three terms and how they can be used to monitor network performance.

In addition to explaining the difference between throughput and bandwidth, this guide defines each of these terms.

If you’re looking for a tool to help you monitor these metrics and improve your network performance, we recommend SolarWinds® Network Bandwidth Analyzer Pack, Network Performance Monitor, and NetFlow Traffic Analyzer. These SolarWinds solutions are designed to be easy to use and scalable, and they can help you implement a comprehensive network performance monitoring strategy.

What Is Throughput?

In the simplest terms, throughput refers to the amount of data transmitted and received during a specific time period. The throughput metric measures the average rate at which transmitted messages successfully arrive at the relevant destination. Rather than measuring the theoretical delivery of packets, throughput provides a practical measurement of their actual delivery.

The average throughput of data on a network gives users insight into the number of packets successfully arriving at the correct destination. To achieve a high level of performance, packets must be able to reach that destination. If too many packets are lost during transmission, network performance is likely to be insufficient. It’s essential for businesses to monitor throughput on their networks, because doing so gives them real-time visibility into network performance and a better understanding of packet delivery rates.

In most cases, network throughput is measured in bits per second (bps). It is also sometimes expressed in data packets per second. When network throughput is measured, an average is taken, and that average is often considered an accurate representation of overall network performance. For example, if your network administrator finds throughput is low, this may indicate a packet loss issue, which often results in low-quality VoIP calls.

What Is Bandwidth?

Bandwidth refers to the maximum amount of data that can be transmitted and received during a specific period of time. If a network has high bandwidth, a higher amount of data can be transmitted and received. Like throughput, bandwidth is measured in bits per second (bps). It can also be expressed in megabits per second (Mbps) and gigabits per second (Gbps).

High bandwidth doesn’t necessarily guarantee optimal network performance. If, for example, network throughput is being impacted by packet loss, jitter, or latency, your network is likely to experience delays even if you have high bandwidth availability.

Bandwidth is sometimes confused with speed, and this misconception is widespread. It is often perpetuated by internet service providers peddling the idea that high-speed services are facilitated by maximum bandwidth availability. While this may make for a convincing advertisement, high bandwidth availability doesn’t necessarily translate into high speeds. If your bandwidth is increased, the only difference is that a higher amount of data can be transmitted at any given time. Although the ability to send more data might seem to improve network speeds, increasing bandwidth doesn’t increase the speed of packet transmission. In reality, bandwidth is only one of the many factors that contribute to network speed. Speed measures response time in a network, and response time is also affected by factors like latency and packet loss rates.
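To make the difference between throughput, bandwidth, and speed more concrete, here is a minimal Python sketch. The function names and all the numbers are hypothetical and chosen only for illustration. The response-time model is a deliberate simplification that assumes a single one-way delay plus the time needed to put the bits on the link, and it ignores packet loss, jitter, and protocol overhead.

```python
def throughput_bps(bits_delivered: int, seconds: float) -> float:
    """Average throughput: bits that actually arrived, divided by elapsed time."""
    if seconds <= 0:
        raise ValueError("seconds must be positive")
    return bits_delivered / seconds


def utilization(throughput: float, bandwidth_bps: float) -> float:
    """Fraction of the link's capacity (bandwidth) that throughput is using."""
    if bandwidth_bps <= 0:
        raise ValueError("bandwidth_bps must be positive")
    return throughput / bandwidth_bps


def transfer_time_s(size_bits: int, bandwidth_bps: float, latency_s: float) -> float:
    """Simplified response time: latency plus time to push the bits onto the link."""
    if bandwidth_bps <= 0:
        raise ValueError("bandwidth_bps must be positive")
    return latency_s + size_bits / bandwidth_bps


if __name__ == "__main__":
    # Illustrative assumption: a 100 Mbps link delivers 600 megabits in 10 seconds.
    measured = throughput_bps(600_000_000, 10)
    print(f"throughput: {measured / 1e6:.0f} Mbps")
    print(f"utilization: {utilization(measured, 100e6):.0%}")

    # Illustrative assumption: an 8,000-bit request over a path with 50 ms latency.
    for bandwidth in (100e6, 1e9):
        t = transfer_time_s(8_000, bandwidth, 0.05)
        print(f"{bandwidth / 1e6:.0f} Mbps link: {t * 1000:.3f} ms")
```

Under these assumptions, the measured throughput is well below the link's bandwidth, and a tenfold increase in bandwidth changes the response time of the small request by only a fraction of a millisecond, because latency dominates.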
